How Our NCAA Bracket Picks Did In 2017: They Won A Lot!

March 6, 2018 - by David Hess

2017 was a really good year for our brackets

We are the only site that collects and publishes extensive data on how our bracket pick advice performs in real-world bracket contests.

Every year after the NCAA tournament ends, we ask our NCAA Bracket Picks customers how our recommended brackets finished in the bracket pools they entered.

This post breaks down our 2017 results.

Editor’s Note: Our 2018 NCAA Bracket Picks are now available for purchase, with some great deals available. See all March Madness packages.

91% of our customers won a prize in a 2017 bracket pool.

That’s not a typo. After 2008 (a year in which we successfully predicted all four Final Four teams, both Finalists, and the NCAA champion), 2017 was likely the second best year ever for our data-driven bracket picking advice.

Based on our post-tournament customer survey, here’s how our bracket picks delivered an edge in 2017:

- 91% of our customers won a prize in at least one bracket pool, compared to an expectation of 20%.

- Our customers won a prize in 72% of the bracket pools they entered (nearly 3 out of every 4), compared to an expectation of 10%.

In other words, compared to expectations, our NCAA Bracket Picks customers were more than 7 times as likely to win a prize in any bracket pool they entered.

Note: When we say “compared to expectations,” we mean compared to how often a customer would be expected to win a bracket pool prize if they hadn’t used our picks, and assuming every person entering their pool is equally skilled. See Appendix 2 at the bottom of the post for more info.

An estimated $2.5 million in winnings

Those results are, obviously, fantastic. Winning a prize in a bracket pool typically earns you a very large return on your pool entry fees, and performances like this year’s are the “big hits” that not only generate outsized profits, but also fund many future years of pool entry fees.

And just to put a number on that profits part: Roughly 30% of our customers responded to our survey this year (a solid response rate for email surveys), and they reported a total of just over $837,000 in bracket pool winnings.

Extrapolating that figure out to our entire customer base implies that over $2.5 million in bracket pool prizes was won using our bracket picks this year.
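As a rough check on that extrapolation, here is a minimal sketch of the arithmetic. It assumes survey respondents are representative of the whole customer base, which the post does not verify, and it treats "roughly 30%" and "just over $837,000" as exact values. The variable names are illustrative.

```python
# Figures quoted above; both are rounded in the post, so the result is approximate.
reported_winnings = 837_000   # total winnings reported by survey respondents (USD)
response_rate = 0.30          # share of customers who answered the survey

# Simple linear scale-up: assumes non-respondents won at the same rate as respondents.
estimated_total = reported_winnings / response_rate

print(f"Estimated total winnings: ${estimated_total:,.0f}")
```

A straight scale-up lands near $2.8 million, so "over $2.5 million" is a conservative statement of the same estimate.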

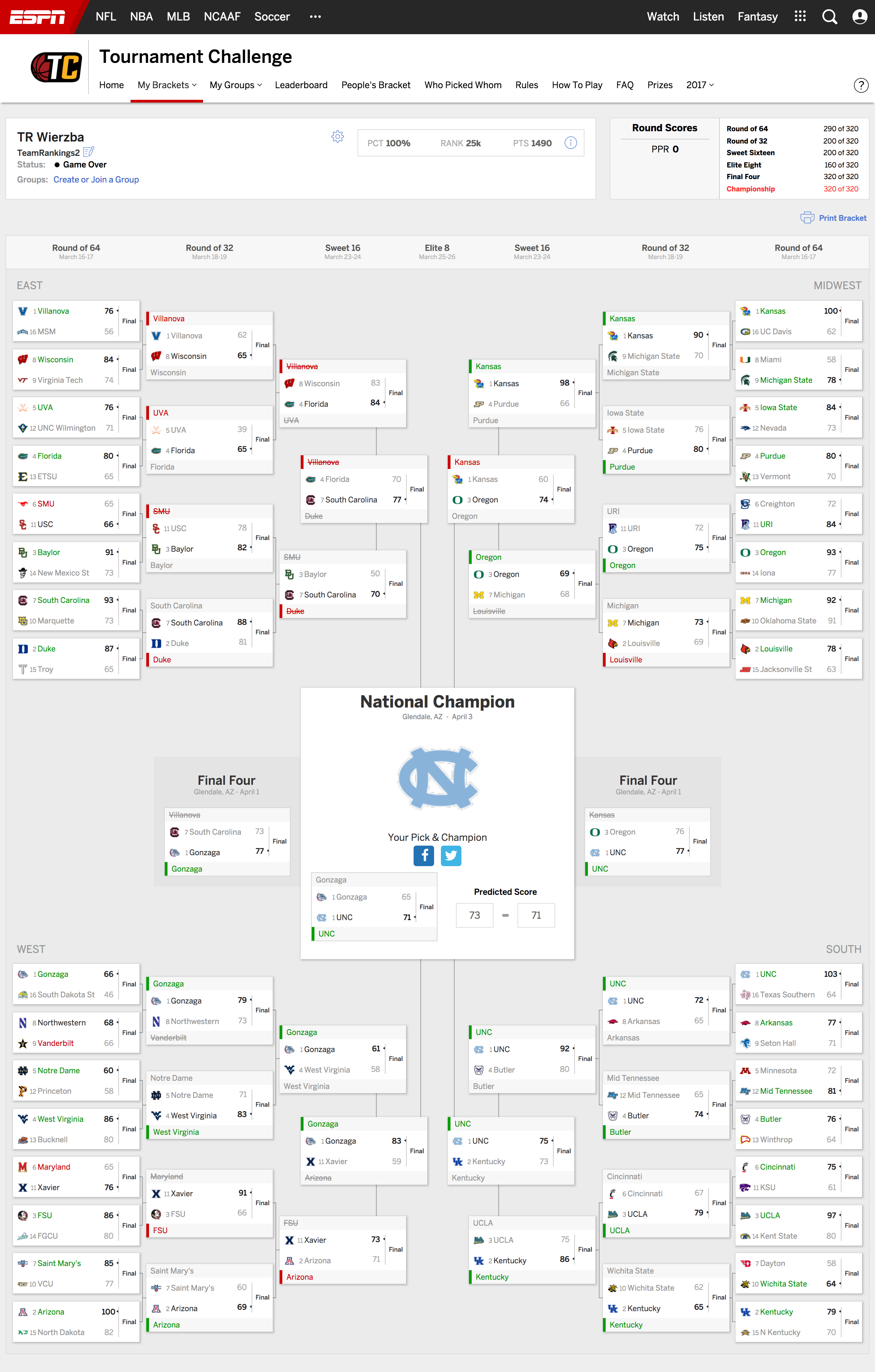

Example of a top-performing bracket

Before we dig into detailed performance numbers and explanations, here’s an example of one of our recommended 2017 brackets. This bracket was for a 14-person pool with a traditional 1-2-4-8-16-32 scoring system, entered into ESPN’s Tournament Challenge contest.

As you can see, it finished in the 100th percentile, beating 99.87% of the nearly 19 million brackets entered on ESPN.

What worked for us in 2017

Our performance in 2017 demonstrated the effectiveness of our value- and portfolio-based approaches to winning bracket pools. Three factors in particular appeared to drive our success:

1. Our Survival Odds nailed the title game

Our algorithmic tournament predictions forecast North Carolina as the most likely team to win the tournament, and Gonzaga as the second most likely tournament champion.

No other major college basketball prediction site forecast UNC as the most likely champion, let alone also picked the correct runner-up:

| Site | Most Likely Champ | Most Likely Runner Up | UNC Champ Rank | UNC Champ Odds |
| --- | --- | --- | --- | --- |
| TeamRankings | UNC | Gonzaga | 1st | 15.2% |
| ESPN BPI | Gonzaga | UNC | 2nd | 15.0% |
| NumberFire | Gonzaga | UNC | 2nd | 14.3% |
| The Power Rank | Gonzaga | UNC | 3rd | 10.5% |
| KenPom | Gonzaga | UNC | 3rd | 9.9% |
| FiveThirtyEight | Villanova | Kansas | 5th | 7.0% |

As a result:

- 81% of our Best Brackets* featured North Carolina as the champion pick

- 99.5% of our Best Brackets featured North Carolina advancing to at least the title game

- 89% of our Best Brackets featured a title game matchup of North Carolina vs. Gonzaga

*A “Best Bracket” is the top custom bracket suggested to a customer for their specific pool, taking into account their scoring system and pool size.

FYI, the handful of Best Brackets we recommended that didn’t feature a UNC-Gonzaga title game were for pools that met one or both of the following criteria:

- They had lots of entries (e.g. thousands), so taking a calculated risk on more of a long shot team made sense from a bracket differentiation perspective

- They had scoring systems that heavily rewarded lower seeds winning in later rounds, so the reward of picking a long shot team to make a deep run outweighed the risks

But even in these types of pools, our Alternate Brackets (designed for customers playing multiple entries in a pool) usually included at least one bracket with UNC as the champion. And in larger pools especially, many of our customers enter more than one bracket.

2. Strong early round picking made a difference (again)

Even though UNC was a relatively popular champion pick, accurate early-round picks gave a nice boost to our Best Brackets, especially in helping them beat opponent brackets that also had UNC as champion.

For example, in the First Round, our Best Bracket for pools with fewer than 15 entries and the traditional 1-2-4-8-16-32 scoring system went 7-for-8 on the #8 vs. #9 and #7 vs. #10 games. It also picked two other upsets by double-digit seeds, and got both of those correct.

The end result was a 29-3 record in the First Round for our Best Bracket for very small pools. In comparison, the average bracket from the general public went only 24-8.

3. Compared to the public, we “faded” #2 Louisville in most pool types

Our analysis indicated that Louisville was an overvalued team in many types of bracket pools in 2017. As a result:

- 90% of our Best Brackets featured #3 Oregon in the Elite 8

- 21% of our Best Brackets featured #7 Michigan upsetting Louisville in the Second Round

In retrospect, it would have been nice for those 90% of Best Brackets to have Oregon advancing all the way to the Final Four. Still, merely picking Oregon to make the Elite 8 gave our brackets a leg up on the majority of the general public.

Results by pool characteristics

While we’re most interested in the overall frequency with which our customers win prizes, investigating performance in different types of pools is also informative.

In our post-tournament survey, we ask our customers for information about every pool that they defined and got picks for in our system. Consequently, we can review how our resulting bracket pick recommendations did based on factors such as:

- The total number of entries in the pool

- The number of brackets the user entered in the pool

- The pool’s scoring system

Results by pool size

The results by pool size look about as we’d expect. As pool size increases, absolute win rate decreases but the edge our picks provide rises.

| Pool Size | Expected To Win A Prize | Actually Won A Prize | Win Rate vs. Expectation |
| --- | --- | --- | --- |
| 10 or fewer entries | 23% | 88% | 3.8x |
| 11 to 30 entries | 15% | 85% | 5.9x |
| 31 to 50 entries | 11% | 85% | 7.7x |
| 51 to 100 entries | 9% | 76% | 8.3x |
| 101 to 250 entries | 6% | 64% | 10.8x |
| 251 to 1000 entries | 5% | 61% | 13.4x |
| 1001 to 9999 entries | 2% | 25% | 14.2x |
| 10,000 or more entries* | 0.5% | 3% | 5.7x |
| Grand Total | 10% | 72% | 7.0x |

*Our system didn’t let people enter a pool size of more than 10,000, so this is a catch-all bin for really giant pools. We ask customers to name their pools, and many of the names for pools this large are “ESPN,” “CBS,” “Yahoo!,” etc. In other words, many of these pools were likely our customers competing for some major site’s grand prize against millions of other brackets, so the true expected win rate is probably much, much lower than 0.5%. Given our likely overestimation of the expected win rate, our lower apparent edge here is not a big surprise.

Small pools

In a small pool with a standard scoring system, getting your champion pick correct goes a long way toward winning a prize.

Given our forecast of UNC as the most likely champ, we expected a lot of our customers in pools with 50 or fewer entries to win a prize, and the numbers bear that out.

Customers won a prize in small pools 4 to 8 times more often than one would expect if our picks provided no edge.

Midsize pools

For pools in the 51 to 1,000 entry range, pool win rates were a bit lower (61% to 76%), as expected, but still extremely good. Our customers won a prize in over half of their pools of 251 to 1,000 entries.

That success came despite our Best Brackets for many pools in this size range having Gonzaga as their champion pick.

This outcome illustrates the value of our supplemental Alternate Brackets. For pools of this size, when Gonzaga was the champion in our Best Bracket, most of the time we suggested North Carolina as the champ pick in the first Alternate Bracket (and often in several Alternate Brackets).

On average, users in pools of this size played 3 brackets in their pool, so we suspect those 2nd and 3rd brackets were a big factor in their success.

Large pools

Once we reach pools with more than 1,000 entries, raw win rates drop substantially, but the edge remains high.

After all, winning a prize in a huge pool requires nearly everything breaking your way, including the distribution of opponent picks.

Still, payouts are generally high enough that winning a prize 25% of the time (as reported by our customers in pools of 1,001 to 9,999 entries, and over 14x the expectation going in) should be very profitable over the long run.

Results by scoring system

With hundreds of different scoring systems entered in our system by customers, we have to group them into broad categories here.

In the table below, we grouped pools by whether the points awarded for every correct pick (“base scoring”) took into account a winning pick’s seed number or not. If not, we then subdivided based on whether they awarded upset bonus points or not.

Finally, we split out the most popular 1-2-4-8-16-32 round-based scoring system.

Based on these categories, our performance was solid across the board. This is great to see, as it means our customers’ success was likely driven by solid core picks across all brackets. That, in turn, means that our accurate Survival Odds probably provided a significant chunk of our edge this year.

In other words, our brackets weren’t better solely because of our advanced bracket optimization algorithm for custom pools, but also because we had better tournament predictions to start with.

| Scoring Type | Expected To Win A Prize | Actually Won A Prize | Win Rate vs. Expectation |
| --- | --- | --- | --- |
| Round-Based (1-2-4-8-16-32) | 10% | 67% | 6.6x |
| Round-Based (Other) | 10% | 78% | 7.8x |
| Round-Based w/ Upset Bonus | 11% | 75% | 7.0x |
| Seed-Based | 11% | 79% | 6.9x |
| Grand Total | 10% | 72% | 7.0x |

Results by number of brackets entered

In theory, as a smart player enters more brackets into a specific pool, two things should happen:

- Their chance of winning a prize should increase

- Their overall edge against the competition should decrease

To explain the second point, consider that for any type of bracket pool, there will be one combination of picks that gives you the absolute best chance to win (i.e. the maximum edge over your opponents). A smart player does their best to identify that bracket, and play it as their first entry.

By definition, then, any additional bracket the smart player enters, assuming it has some different picks than the first bracket, would be slightly less likely to win than the optimal first bracket.

Playing more brackets in a pool therefore should increase your overall odds of winning a prize, but your expected return on investment also decreases a bit, since you’re paying the same price to enter the pool with a second, third, etc. bracket that is not quite as good as the first bracket you entered.
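The trade-off described above can be sketched with a toy model. This is an illustration under stated assumptions, not our actual optimization: the pool is winner-take-all, ties are ignored, the prize is held fixed, and the per-bracket win chances are made-up numbers. The function name `pool_outlook` is hypothetical.

```python
def pool_outlook(win_probs, entry_fee, prize):
    """Return (chance of winning, ROI) for a set of brackets in one winner-take-all pool.

    win_probs: per-bracket chance of finishing first. Only one bracket can win,
    so the events are mutually exclusive and the chances add (ties are ignored).
    """
    chance = sum(win_probs)
    cost = entry_fee * len(win_probs)
    roi = (chance * prize - cost) / cost
    return chance, roi

# HYPOTHETICAL numbers for illustration only: a $10 pool with a $500 prize, where the
# best bracket wins 10% of the time and each extra bracket is a bit weaker.
bracket_probs = [0.10, 0.08, 0.06]

for k in range(1, len(bracket_probs) + 1):
    chance, roi = pool_outlook(bracket_probs[:k], entry_fee=10, prize=500)
    print(f"{k} bracket(s): win chance {chance:.0%}, ROI {roi:+.0%}")
```

With these inputs the chance of winning rises with each added bracket while the return per dollar of entry fees falls, which is the pattern the theory predicts.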

That’s roughly what we saw in 2017. The main exception is that playing a third bracket didn’t seem to increase our customers’ chance of winning, and, counterintuitively, playing more than three brackets actually lowered the chance of winning.

| Number of Brackets Entered | Expected To Win A Prize | Actually Won A Prize | Win Rate vs. Expectation |
| --- | --- | --- | --- |
| 1 | 8% | 68% | 8.5x |
| 2 | 12% | 81% | 6.9x |
| 3 | 13% | 82% | 6.1x |
| more than 3 | 15% | 64% | 4.4x |
| Grand Total | 10% | 72% | 7.0x |

Why would we see that pattern? It turns out there’s a simple explanation.

It’s mostly an artifact of lumping all pool sizes together. Customers entered more brackets in larger pools, which makes sense. And, of course, win rates are lower in larger pools, because there’s more competition.

This becomes evident if we look at the numbers again, but this time take pool size into account:

Pool Size: 50 or fewer

| Number of Brackets Entered | Expected To Win A Prize | Actually Won A Prize | Win Rate vs. Expectation |
| --- | --- | --- | --- |
| 1 | 12% | 83% | 7.1x |
| 2 | 18% | 90% | 4.9x |
| 3 | 24% | 96% | 3.9x |
| more than 3 | 32% | 87% | 2.7x |
| Grand Total | 15% | 86% | 5.7x |

Pool Size: 51 to 250

| Number of Brackets Entered | Expected To Win A Prize | Actually Won A Prize | Win Rate vs. Expectation |
| --- | --- | --- | --- |
| 1 | 4% | 57% | 15.9x |
| 2 | 7% | 77% | 11.0x |
| 3 | 10% | 84% | 8.0x |
| more than 3 | 17% | 79% | 4.7x |
| Grand Total | 8% | 71% | 9.2x |

Pool Size: 251 to 9,999

| Number of Brackets Entered | Expected To Win A Prize | Actually Won A Prize | Win Rate vs. Expectation |
| --- | --- | --- | --- |
| 1 | 1% | 38% | 30.2x |
| 2 | 4% | 69% | 18.1x |
| 3 | 5% | 68% | 12.5x |
| more than 3 | 7% | 61% | 8.2x |
| Grand Total | 4% | 53% | 13.5x |

The data above is a bit noisy since some of these bins have a pretty small sample size; not many people enter more than 3 brackets in a pool that has 50 or fewer total entries, for example. Still, a couple of trends seem evident:

- In small pools, even our Best Brackets were enough to get prize win rates over 80%, and there’s just not a lot of room for improvement above that.

- In medium and large pools, there was a big jump in win rate from entering a second bracket, but the difference between 2, 3, and 4+ brackets is mostly statistical noise. We suspect that’s because for most pools, one of the top two suggested brackets already featured UNC beating Gonzaga in the title game. If that one wasn’t good enough to win a prize, then the third, fourth, and fifth options also likely weren’t.
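The lumping effect described in this section can be reproduced with a small weighted average. The win rates below come from the three pool-size tables above ("small" is 50 or fewer entries, "medium" is 51 to 250, "large" is 251 to 9,999). The pool mix is entirely hypothetical, since the post does not publish how many pools fall in each cell; it is chosen only to show how the aggregate can flip.

```python
# Prize win rates by pool-size bucket, taken from the three tables above.
win_rate = {
    "1 bracket":   {"small": 0.83, "medium": 0.57, "large": 0.38},
    "more than 3": {"small": 0.87, "medium": 0.79, "large": 0.61},
}

# HYPOTHETICAL share of each group's pools in each bucket (not published in the post).
pool_mix = {
    "1 bracket":   {"small": 0.70, "medium": 0.20, "large": 0.10},
    "more than 3": {"small": 0.10, "medium": 0.30, "large": 0.60},
}

def blended_rate(group):
    """Weighted average win rate for a group across pool-size buckets."""
    return sum(win_rate[group][b] * pool_mix[group][b] for b in win_rate[group])

for group in win_rate:
    print(f"{group}: blended win rate {blended_rate(group):.1%}")
```

Under this assumed mix, the "more than 3" group wins more often than the "1 bracket" group inside every bucket, yet its blended rate comes out lower because most of its pools are large ones.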

Results by individual customer

The previous section explored our results on a pool-by-pool basis. But what’s more interesting to many people is the percentage of our customers that won a prize in at least one pool.

A side note on diversification strategy

Data from our customers indicates that for most people, winning something in pools, on a more frequent basis, tends to be valued more than winning a big prize once in a long while. As a result, we encourage customers to diversify picks across their pools, if they enter more than one pool.

For example, imagine a customer has one entry in each of three pools, and wants to maximize their chance of making at least some money during March Madness. In that case, using one of our recommended brackets with a North Carolina champion pick, another with a Gonzaga champion pick, and a third with a Kansas champion pick would be a better strategy than using three brackets that all had Gonzaga as their champion.

The result of a “diversified portfolio” approach like the one above should be that a customer’s average pool win rate decreases slightly, but their chance to win a prize in at least one pool increases. Put another way, this strategy reduces the extremely high variance inherent in bracket pool contests.
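A toy calculation shows why diversifying has that effect. This is a sketch under strong simplifying assumptions, all of them ours: three pools with one entry each, a bracket can win a prize only if its champion pick is right, and it then wins with a fixed probability independently in each pool. The title odds and the names `concentrated` and `diversified` are hypothetical.

```python
# HYPOTHETICAL title odds for three champion picks (not figures from the post).
title_odds = {"Team A": 0.15, "Team B": 0.13, "Team C": 0.10}

# Simplifying assumption: a bracket wins a prize only if its champion pick is right,
# and then with this fixed probability, independently in each pool.
WIN_GIVEN_CHAMP = 0.5

def concentrated(team, n_pools):
    """Use the same champion in every pool. Returns (avg pool win rate, P(at least one prize))."""
    avg_win_rate = title_odds[team] * WIN_GIVEN_CHAMP
    at_least_one = title_odds[team] * (1 - (1 - WIN_GIVEN_CHAMP) ** n_pools)
    return avg_win_rate, at_least_one

def diversified(teams):
    """Use a different champion in each pool. Only one champion pick can be right,
    so the per-pool win events are mutually exclusive and their chances add."""
    per_pool = [title_odds[t] * WIN_GIVEN_CHAMP for t in teams]
    return sum(per_pool) / len(per_pool), sum(per_pool)

print("concentrated:", concentrated("Team A", 3))
print("diversified: ", diversified(["Team A", "Team B", "Team C"]))
```

With these made-up inputs, the diversified portfolio has a lower average pool win rate than three brackets on the single most likely champion, but a higher chance of cashing in at least one pool.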

In the tables below, we combine the effects of pool size, number of pools entered, and number of brackets entered in each pool to get the expected chance of an NCAA Bracket Picks subscriber winning a prize in at least one pool in 2017.

We then compare that expectation to the percent of customers that actually won a prize in at least one pool.

Results By Number Of Brackets Entered Across All Pools

| # of brackets entered across all pools | Expected To Win A Prize | Actually Won A Prize | Win Rate vs. Expectation |
| --- | --- | --- | --- |
| 1 | 9% | 83% | 9.3x |
| 2 | 16% | 93% | 5.8x |
| 3 | 19% | 94% | 5.0x |
| 4 | 23% | 91% | 4.0x |
| 5 | 21% | 89% | 4.3x |
| 6 to 10 | 30% | 97% | 3.2x |
| more than 10 | 38% | 90% | 2.4x |
| Grand Total | 20% | 91% | 4.5x |

As with the above pool-based analysis of the number of brackets entered, you’d expect that the more brackets a customer entered across all pools, the higher their expected and actual rates of winning at least one prize would be.

However, we again see that the win rates plateau after the second bracket. Similar to the discussion above, we think this is simply because:

- More brackets generally means larger pools, which are more difficult to win, and

- By the time a customer has entered a second bracket, there’s a good chance they’ve already found a UNC-over-Gonzaga bracket.

We have the data to account for that first bullet point, so let’s do that.

Results By Customer Expected Prize Win Rate

This next table groups customers by their expected chance to win a prize in at least one pool (assuming all entries in each pool have an equal chance).

When we say a customer had a 50% chance to win a prize, that’s without using our pick suggestions, and assuming everyone in every pool is equally skilled. So a customer with a prize chance that high is either entering multiple brackets in a pool, entering multiple pools, or entering very small pools, or (most likely) doing more than one of those things.

We were a bit worried that our pick suggestions would have trouble improving such already-high odds, but it turned out we shouldn’t have been concerned:

| Customer "Chance To Win At Least One Prize" Bin | Expected To Win At Least One Prize | Actually Won At Least One Prize | Win Rate vs. Expectation |
| --- | --- | --- | --- |
| less than 5% | 3% | 69% | 24.0x |
| 5% to less than 10% | 7% | 90% | 12.2x |
| 10% to less than 20% | 14% | 92% | 6.4x |
| 20% to less than 30% | 25% | 96% | 3.9x |
| 30% to less than 50% | 38% | 99% | 2.6x |
| 50% or more | 63% | 98% | 1.6x |
| Grand Total | 20% | 91% | 4.5x |

These results show exactly the trends that we expect. As our customers’ baseline expected chances to win a prize went up, their actual win rate increased, while the relative edge they got from our pick suggestions decreased.

Some of that is simply a ceiling effect. When a customer starts with a 63% chance to win a prize [see the “50% or more” line], the maximum theoretical ratio of actual win rate to expected win rate is only 1.6x. In that context, we’re … uh … very happy with a 1.6x rate.
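The ceiling is easy to compute for every bin: since nobody can win more than 100% of the time, the largest possible ratio is 1 divided by the expected win rate. The sketch below uses the rounded percentages from the table above, so its ceilings are approximate; `max_ratio` is an illustrative name.

```python
# (bin label, expected win rate, actual win rate) from the table above, as rounded there.
bins = [
    ("less than 5%", 0.03, 0.69),
    ("5% to less than 10%", 0.07, 0.90),
    ("10% to less than 20%", 0.14, 0.92),
    ("20% to less than 30%", 0.25, 0.96),
    ("30% to less than 50%", 0.38, 0.99),
    ("50% or more", 0.63, 0.98),
]

def max_ratio(expected):
    """Largest possible actual/expected ratio, reached when the actual win rate is 100%."""
    return 1.0 / expected

for label, expected, actual in bins:
    print(f"{label:>22}: ceiling {max_ratio(expected):4.1f}x, "
          f"share of customers who won {actual:.0%}")
```

For the two highest bins the reported ratios (2.6x and 1.6x) sit essentially at the ceiling, which is why the relative edge looks small there even though almost every customer won.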

Of course, we’re also extremely pleased with the first line of the table. For customers with a baseline expectation of only a 3% chance of winning, our picks this year raised that to 69%!

To put that 3% in perspective, here are some actual scenarios from that bin, in which our customers won prizes:

- 1 entry in a 200-person winner-take-all pool

- 1 entry in a winner-take-all 10000-person pool, and 1 entry in a 10000-person pool that pays top 10

- 10 entries each in two winner-take-all 10000-person pools, and 1 entry in a 50-person pool that pays top 2

- 5 entries in a single 1500-person pool that pays top 10

- 1 entry each in three different winner-take-all pools with sizes of 30, 120, and 10000

- 1 entry in a winner-take-all 5000-entry pool, 1 entry in a 225-person pool that pays top 3, and 1 entry in a 300-person pool that pays top 3

- 1 entry in a 500-person pool that pays top 10

These customers above should each expect to win a prize less than once every 20 years (and only once every 200 years for the customer in the first bullet). Every single one of them won a prize this year using our brackets.
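The "once every N years" figures follow from treating each year as an independent try with the same baseline chance. Here is a minimal sketch of that arithmetic for the two single-entry scenarios whose baseline is simply places paid divided by pool size, plus the 5% upper edge of the bin. The function names are illustrative, and the constant year-to-year chance is a simplifying assumption.

```python
def years_between_prizes(annual_chance):
    """Average number of years per prize if the same chance repeats every year."""
    return 1.0 / annual_chance

def chance_within(annual_chance, years):
    """Chance of at least one prize over a span of independent years."""
    return 1.0 - (1.0 - annual_chance) ** years

# Single-entry baselines under the equal-skill assumption: places paid / pool size.
one_in_200 = 1 / 200     # 1 entry, 200-person winner-take-all pool (first bullet)
top10_of_500 = 10 / 500  # 1 entry, 500-person pool paying top 10 (last bullet)
bin_ceiling = 0.05       # upper edge of the "less than 5%" bin

for label, p in [("200-person winner-take-all", one_in_200),
                 ("500-person top 10", top10_of_500),
                 ("5% bin edge", bin_ceiling)]:
    print(f"{label}: once every {years_between_prizes(p):.0f} years, "
          f"{chance_within(p, 20):.0%} chance of a prize within 20 years")
```

The first and third rows reproduce the 200-year and 20-year figures quoted above.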

Closing thoughts

When evaluating bracket pick performance, it’s imperative to understand the nature of bracket pools. You’re pretty much never expected to win — but when you do win, the return you earn more than makes up for multiple past losing entries.

If you use the right strategies and commit to playing for the long term, the expected returns from bracket pools are extremely compelling. That’s why we’ve made pool picks an area of focus for TeamRankings, even though we know that the best advice in the world still won’t generate pool wins every year.

As objectively as we can measure, our customized bracket picks delivered an extremely strong edge to our customer base as a whole in 2017. On average, our customers were 4.5 times as likely as expected to take home at least one bracket pool prize come April, and the year’s worth of bragging rights that come with it.

Throw in the fact that our subscribers outsource all of the time and stress of bracket pick research to us — especially for pools with uncommon or downright crazy scoring systems for which it’s much more difficult to optimize picks — and we think the long term value proposition clearly passes the test.

Of course, even after one of our best years ever, we also realize that not all of our customers were happy with our brackets in 2017. Some of our subscribers only played one bracket in a large or mid-sized pool and may not have come that close to winning a prize, or maybe their pool(s) used a particular scoring system for which our picks didn’t happen to do well this year.

As much as it bothers us, that fact is an inevitable reality of the business of selling bracket advice. Playing in bracket pools is a risky business, and our sophisticated approach to customizing picks for each customer means that two individuals may have vastly different outcomes in the same year. In the long run, however, using a more sophisticated strategy for picks will pay off.

Longer Term Performance

We’ve been tracking customer results via survey for three years now, and 2017 was the best year yet. But every year has shown positive returns:

Results By Pool

| Year | Expected To Win A Prize | Actually Won A Prize | Win Rate vs. Expectation |
| --- | --- | --- | --- |
| 2015 | 10% | 14% | 1.4x |
| 2016 | 11% | 24% | 2.3x |
| 2017 | 10% | 72% | 7.0x |
| Average | 10% | 37% | 3.5x |

Results By Customer

| Year | Expected To Win At Least One Prize | Actually Won At Least One Prize | Win Rate vs. Expectation |
| --- | --- | --- | --- |
| 2015 | 19% | 31% | 1.6x |
| 2016 | 20% | 41% | 2.0x |
| 2017 | 20% | 91% | 4.5x |
| Average | 20% | 54% | 2.8x |

Our commitment, as always, is to keep improving and refining our methods every year, so that we offer our customers the best possible chance to win, and deliver the best possible ROI over the long term.

Since we pioneered data-driven bracket picks and analysis tools over fifteen years ago, our success has cultivated a base of loyal customers who are winning bracket pool prizes much more often than they used to win, and much more often than they are expected to win. That’s the only reason why we’ve been able to build a successful business.

Appendix 1: How we define success

As you’ve likely surmised if you’ve read this far, we really only care about one metric when it comes to measuring the success of our bracket picks: How often do our customers win bracket pool prizes using our picks?

There are a number of other objective metrics we could use to measure the performance of our bracket advice. We could look at how many picks our top brackets got right, how many points they scored, or their finishing percentile in a national bracket contest like ESPN’s Tournament Challenge.

But those metrics are secondary to our customers, and there is no consistent benchmark to measure against across years. Some years, getting two Final Four picks right qualifies as an outstanding performance; other years, you may need to get three Final Four picks right to have a shot at a top finish in your pool.

Perhaps more importantly, if your goal is to win a prize in a pool, avoiding picking all the most likely winners (and using more of a contrarian picking strategy instead) is often the best move.

In the end, what matters most to our paying subscribers is winning prizes, and making a positive financial return on their investment in TeamRankings. So that’s what we measure.

Appendix 2: How we measure success

If you’re curious how we come up with the customer prize win rates and baseline expectations quoted in the post, here are some key details:

- To calculate expectations for prize wins, we assume that all competitors in customer pools are equally skilled. So presuming that no one uses our picks, each contestant in a 100-person, single-entry, winner-take-all bracket pool would have a 1% chance to win the pool.

- We adjust prize winning expectations to account for cases where our customers played multiple brackets in the same pool, or played in multiple bracket pools. (If the baseline expectations for winning a prize quoted in the post seem high, that’s why.)

- To get our picks, subscribers provide us with details about each bracket pool they intend to enter (e.g. scoring system, total number of entries, prize structure). After the tournament ends, we email them a custom-built survey to ask whether they actually played our picks in each pool, and if so, how they finished.

- This year, we collected the results of how our recommended brackets did in more than 2,000 real customer pools. As far as we know, no other site even comes close to measuring the real-world performance of their bracket advice on that scale.
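For readers who want to see the equal-skill baseline as a calculation, here is one way it could be computed. The post states only that expectations are adjusted for multiple brackets and multiple pools, not the exact formula, so treat this as a sketch under our own assumptions: every bracket in a pool is equally likely to finish in any place, and results in different pools are independent. The function names and the tuple layout are hypothetical.

```python
from math import comb, prod

def pool_baseline(pool_size, places_paid=1, entries=1):
    """Chance that at least one of `entries` brackets lands in a paid place,
    if every bracket in the pool is equally likely to finish anywhere."""
    # Probability that all paid places go to the other (pool_size - entries) brackets.
    none_paid = comb(pool_size - entries, places_paid) / comb(pool_size, places_paid)
    return 1.0 - none_paid

def customer_baseline(pools):
    """Chance of a prize in at least one pool, treating pools as independent (an assumption).

    pools: iterable of (pool_size, places_paid, entries) tuples.
    """
    return 1.0 - prod(1.0 - pool_baseline(*pool) for pool in pools)

# The 100-person, single-entry, winner-take-all example from the first bullet.
print(f"{pool_baseline(100):.1%}")

# Two entries in that pool, plus one entry in a separate 50-person pool paying top 2.
print(f"{customer_baseline([(100, 1, 2), (50, 2, 1)]):.1%}")
```

The first call reproduces the 1% figure from the example above. Real brackets from the same customer are correlated across pools, so the independence assumption is only a rough approximation.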
