Charting Screens In College Basketball (Stat Geek Idol 2 Finalist)

April 8, 2013 - by Joshua Riddell

This post is one of the five finalists in our second Stat Geek Idol contest. It was conceived of and written by Joshua Riddell (@Joshua_Riddell).

In today’s game, teams can measure or quantify nearly every aspect of a basketball game. With Synergy Sports, they can easily pin down how they fare on offense against man and zone defenses, how effective they are in the pick and roll, how their offense performs in transition, and countless other scenarios. Many teams also chart other aspects of the game themselves, including deflections, a practice made most famous by Louisville and Indiana and recently profiled in an NPR article.

This raises the question: why don’t teams chart and quantify the screens their players set?

Synergy Sports can measure how effectively players use screens, but the act of setting screens to free a teammate seems to be undervalued. Brett Koremenos first proposed this idea on Hoopspeak and gave some excellent reasons why teams need to begin measuring this crucial basketball action. One could argue that a coach’s primary job in the preseason is to establish a role for each player on the team. If a player’s offensive role is established as a screener, he has little way of knowing how well he has done, nor does he have any attainable goals for how many screens to set in each game, even though his role could be a critical part of a successful offense. Screening is a skill, and measuring it would encourage players to focus on improving this part of their game.

If teams do chart and count screens, they do not publicize it the way some teams do with deflections, charges taken or similar metrics. Coaching staffs see deflections as an integral part of a fierce defense, so they give their players concrete goals for this statistic and follow up by counting each deflection. While it could vary with the offensive scheme, one could argue that screens are just as essential to an offense.

So why isn’t this aspect of the game given as much attention as other aspects? And, if teams do chart screens, could this exercise provide any value to help the team’s success on the offensive side?

Charting Screens

I wanted to see if charting screens could provide any usable information for a coaching staff. To do so, I charted three games each for Michigan and Indiana, the top two offenses in 2013 as measured by Ken Pomeroy’s offensive efficiency.

To chart screens, you have to accept a large measure of subjectivity in deciding what counts as a screen. What one person sees as an effective screen may not be what someone else sees. Here are a few rules I tried to follow when charting screens:

- All screens I deemed effective were counted, even if they did not result in an immediate basket, shot or pass to the player using the screen. If the player was open after using the screen, I counted it, even if nothing happened as a result.

- I counted screens that gave the teammate space, even if the screener did not make contact with the defender. If the screen was effective enough to get his teammate open for a possible drive, shot or pass, I gave the screener credit, since he had done his job.

- I generally did not count screens on baseline or sideline out-of-bounds plays, unless the play led directly to a basket.

- All possessions were charted in this exercise, including backcourt turnovers and fast breaks.
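The charting rules above can be written down as a simple filter, which makes it easier for a staff to apply them consistently across charters. The sketch below is a minimal illustration, not the author’s actual tool: the `ChartedScreen` record and its fields (`freed_teammate`, `out_of_bounds_play`, `led_to_basket`) are hypothetical names chosen to mirror the rules, and it hardens the “generally did not count” judgment call on out-of-bounds plays into a strict rule.

```python
from dataclasses import dataclass


@dataclass
class ChartedScreen:
    # Hypothetical record of one observed screen; field names are illustrative only.
    screener: str
    freed_teammate: bool      # teammate was open for a drive, shot or pass afterward
    out_of_bounds_play: bool  # screen came on a baseline/sideline out-of-bounds play
    led_to_basket: bool       # the play produced a basket directly


def counts(screen: ChartedScreen) -> bool:
    """Credit any screen that freed a teammate (contact or not, shot or not);
    skip out-of-bounds plays unless they led directly to a basket."""
    if not screen.freed_teammate:
        return False
    if screen.out_of_bounds_play and not screen.led_to_basket:
        return False
    return True


# All possessions are charted, so fast breaks and backcourt turnovers
# are not filtered out here.
log = [
    ChartedScreen("A", freed_teammate=True, out_of_bounds_play=False, led_to_basket=False),
    ChartedScreen("B", freed_teammate=False, out_of_bounds_play=False, led_to_basket=False),
    ChartedScreen("A", freed_teammate=True, out_of_bounds_play=True, led_to_basket=False),
    ChartedScreen("C", freed_teammate=True, out_of_bounds_play=True, led_to_basket=True),
]
counted = [s for s in log if counts(s)]
print(len(counted))  # 2
```

Only the first and last screens in the sample log are credited: the second freed no one, and the third came on an out-of-bounds play that did not produce a basket.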

Michigan Screens

Michigan is an interesting first case for this exercise, as their offense does not rely on setting screens to get players open regularly. Instead, they rely more on cutting and individual dribble penetration by their guards to give their players good looks at the basket. The majority of their screens come from the big men on pick and roll screens, as their guards rarely set screens.

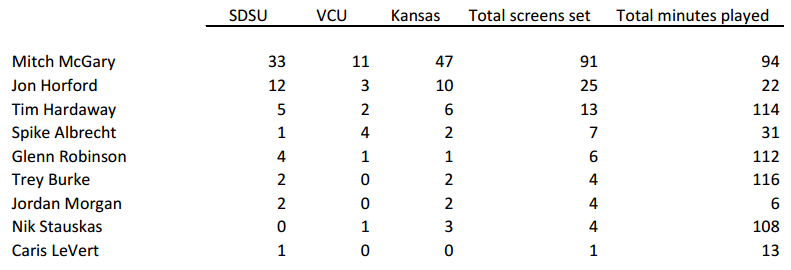

In charting three games against South Dakota State, VCU and Kansas, I counted the screens shown in the table, with minutes played added for reference.

McGary clearly deserves a ton of credit for his screening. He had two notable big screens, one in the VCU game and one in the Kansas game, but he was a workhorse throughout both games, and his contributions on both ends of the floor are a big reason Michigan has advanced to the Elite Eight.

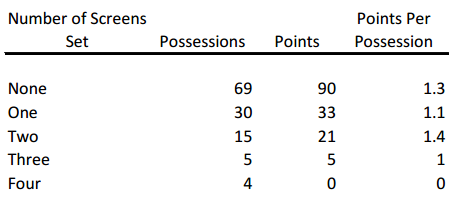

As expected, there is no noticeable benefit from setting more screens per possession: in this small sample, offensive efficiency does not increase as the number of screens increases. Even over a larger sample, I would not expect a linear relationship between screens set and points per possession.
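One way to check for a linear relationship is the Pearson correlation between per-game screens per possession and points per possession. The sketch below computes it by hand; the per-game values are hypothetical placeholders, not the charted Michigan numbers.

```python
import math


def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient between two equal-length samples."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


# Hypothetical per-game values for three games (NOT the charted data).
screens_per_poss = [0.60, 0.75, 0.90]
points_per_poss = [1.15, 0.98, 1.10]

r = pearson(screens_per_poss, points_per_poss)
print(f"r = {r:.2f}")
```

With only three games, r is extremely unstable: moving a single game can change it drastically, and with two games it is always exactly +1 or -1. That is why a three-game sample says very little either way.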

Michigan would benefit most from charting by tailoring the exercise to track which player sets the most effective ball screens and using that player more often in that role. Giving their big men an attainable target for effective screens set would push them to focus more on setting screens and freeing their teammates. Better ball screens would, in turn, make their dynamic guards even more dangerous when creating off the dribble.
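Because the tables include minutes played, one way to find the most effective ball screener is to normalize each player’s effective ball screens to a per-40-minute rate. The sketch below assumes a hypothetical charting log of `(screener, was_ball_screen, was_effective)` tuples; the player labels, counts and minutes are made up for illustration.

```python
from collections import Counter

# Hypothetical charting log: (screener, was_ball_screen, was_effective).
screens = [
    ("big_1", True, True), ("big_1", True, True), ("big_1", True, False),
    ("big_2", True, True), ("big_2", False, True),
    ("wing_1", True, True),
]
# Hypothetical minutes played across the charted games.
minutes = {"big_1": 60.0, "big_2": 40.0, "wing_1": 20.0}

# Count only ball screens that the charter judged effective.
effective_ball = Counter(
    name for name, is_ball, effective in screens if is_ball and effective
)

# Per-40 rate so players with different minutes can be compared.
per_40 = {name: 40 * effective_ball[name] / mins for name, mins in minutes.items()}

for name, rate in sorted(per_40.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name}: {rate:.2f} effective ball screens per 40")
```

In this made-up log, the low-minute wing tops the per-40 list on a single screen. Rates built on few minutes deserve the same small-sample caution as the team numbers.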

Indiana Screens

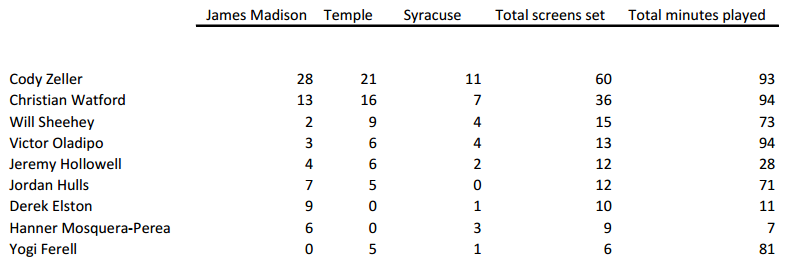

Indiana’s offense is built more on screening than Michigan’s, and we see that both in the number of screens set and in who sets them. While Michigan’s guards rarely set screens, Indiana’s guards screen regularly, as the data from their three tournament games shows. Indiana’s big men still set the most screens on the team, but the guards get in on the screening action as well.

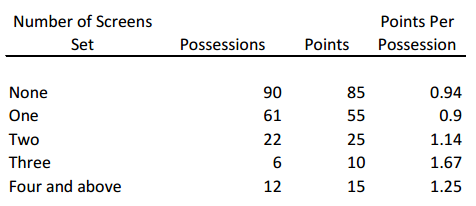

The Syracuse zone played a major role in skewing this data downward, as Indiana set only 33 screens against the zone. Looking only at the first two games, Indiana set 1.1 screens per possession, which drops to 0.9 screens per possession once the Syracuse game is included.
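The drop follows from simple arithmetic if the rate is pooled, that is, total screens divided by total possessions, so that each game is weighted by its possessions. The post does not say whether the figures were pooled or averaged per game, so this is an assumption; the numbers below are hypothetical, not Indiana’s actual totals.

```python
# Hypothetical (screens, possessions) per game; not Indiana's actual totals.
games = {
    "game_1": (60, 60),
    "game_2": (66, 60),
    "zone_game": (30, 60),
}

first_two = [games["game_1"], games["game_2"]]

# Pooled rate: total screens over total possessions.
pooled_two = sum(s for s, _ in first_two) / sum(p for _, p in first_two)
pooled_all = sum(s for s, _ in games.values()) / sum(p for _, p in games.values())

print(f"first two games: {pooled_two:.2f} screens per possession")
print(f"all three games: {pooled_all:.2f} screens per possession")
```

Here one zone game at half the usual screening volume pulls the three-game rate from 1.05 to about 0.87.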

Points per possession trend slightly upward for Indiana in this sample, but I would expect the data to look similar to Michigan’s over a larger sample. Even with the different styles of offense, I don’t expect a trend to emerge showing that offenses perform better as they set more screens per possession.

Is The Data Useful?

After charting these six games, I see some clear uses for this statistic, but also some reasons for caution. This is a small sample, so any comparison of offensive performance per possession against screens set should be taken with a grain of salt, and I think it should be weighted lightly, if at all, even on a larger scale. Each team’s sample is further skewed by opponents who forced it to change its offense: Syracuse by playing its zone defense and VCU by forcing an up-tempo transition game. The data shows little correlation between screens set and points scored, and I expect that lack of correlation to hold as the sample grows.

Giving the offense this information risks turning players into robots who screen just to screen and count their screens before they look for a shot. Coaches need to be careful when citing this information, or they risk bogging down their offense as players focus too much on the number of screens set.

Where this information will be useful is at the individual level: counting the number of screens set by each player. Players will be able to take pride in their screening ability, which in turn should make offenses run more fluidly, since players will be more open after using better screens. Bonus points could be given to the screener for points scored directly off a screen or for ‘pancake screens’, like the McGary screen in the VCU game that took over my Twitter feed.
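The bonus-point idea could be turned into a single per-game screening score. The sketch below is one hypothetical way to do it: the weights (`EFFECTIVE_SCREEN`, `SCREEN_LED_TO_SCORE`, `PANCAKE_SCREEN`) are made-up values a staff would tune, not values proposed in the post.

```python
# Hypothetical weights; a coaching staff would choose its own.
EFFECTIVE_SCREEN = 1.0     # base credit for each effective screen
SCREEN_LED_TO_SCORE = 0.5  # bonus when the screen directly produced points
PANCAKE_SCREEN = 1.0       # bonus for a 'pancake' screen


def screener_score(effective: int, led_to_score: int, pancakes: int) -> float:
    """Weighted screening score for one player in one game.

    Bonuses stack on top of the base credit: a screen that led to a score
    is also counted among the effective screens.
    """
    return (effective * EFFECTIVE_SCREEN
            + led_to_score * SCREEN_LED_TO_SCORE
            + pancakes * PANCAKE_SCREEN)


print(screener_score(effective=12, led_to_score=4, pancakes=1))  # 15.0
```

Keeping the bonuses small relative to the base credit leaves most of the score tied to the routine screens that make up the bulk of the job.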

The Future Of Charting Screens

Players tend to judge their performance by measurable statistics. Some players are known to pass up long-range heaves at the end of halves (or NBA quarters) to protect their field goal percentage. Turning screens into a counting statistic gives players an incentive to become better screeners, because it gives them a measure of their success in this part of their role in each game. This should make offenses more fluid, leading to easier baskets as players take more pride in their screens.

Teams could modify the charting system to fit their own preferences and offensive needs. They could chart only screens that lead directly to baskets, focusing their players on setting good screens rather than half-hearted ones that merely boost their numbers. If they want to dig into team data, they should consider charting only half-court possessions, as charting all possessions tends to pull the numbers away from the goal of this project. Whatever they choose, they should count only screens they deem acceptable, so players focus on setting a strong screen to free their teammate instead of breezing through screens to pad their totals.
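These options amount to a small charting configuration. The sketch below shows one hypothetical way to express them; `Screen`, `ChartingConfig` and their fields are illustrative names, and when `half_court_only` is on, the possession count passed in should be half-court possessions only so the rate stays consistent.

```python
from dataclasses import dataclass


@dataclass
class Screen:
    half_court: bool     # came in a half-court set, not transition
    solid: bool          # charter judged it an acceptable, committed screen
    led_to_basket: bool  # the play produced a basket directly


@dataclass
class ChartingConfig:
    half_court_only: bool = True
    baskets_only: bool = False


def keep(screen: Screen, cfg: ChartingConfig) -> bool:
    """Decide whether a screen counts under a team's chosen options."""
    if not screen.solid:
        return False  # half-hearted screens never count
    if cfg.half_court_only and not screen.half_court:
        return False
    if cfg.baskets_only and not screen.led_to_basket:
        return False
    return True


def screens_per_possession(screens: list[Screen], possessions: int,
                           cfg: ChartingConfig) -> float:
    # Pass half-court possessions when cfg.half_court_only is True.
    return sum(keep(s, cfg) for s in screens) / possessions


log = [
    Screen(half_court=True, solid=True, led_to_basket=True),
    Screen(half_court=True, solid=True, led_to_basket=False),
    Screen(half_court=False, solid=True, led_to_basket=True),
    Screen(half_court=True, solid=False, led_to_basket=True),
]
strict = ChartingConfig(half_court_only=True, baskets_only=True)
loose = ChartingConfig(half_court_only=False)
print(sum(keep(s, strict) for s in log))  # 1
print(sum(keep(s, loose) for s in log))   # 3
```

The stricter configuration yields smaller numbers, but each counted screen is more clearly tied to the outcome the staff cares about.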

There will likely never be a perfect way to measure screens, nor a uniform standard that would allow players and teams across the country to be compared. Teams’ mileage will vary, but the main benefit will almost certainly come from measuring individual players’ screens, not from measuring screens per possession and any resulting offensive efficiency. The hope is that measuring screens gives screeners a quantitative target to reach, encouraging them to set better screens for their teammates. It’s unlikely that this solves the scoring problem, but hopefully it could be one step in the process.

Printed from TeamRankings.com - © 2005-2024 Team Rankings, LLC. All Rights Reserved.