Does Past NCAA Tournament Experience Lead To March Success? The Data Says…

February 24, 2012 - by John Ezekowitz

As the calendar turns quickly towards March, certain narratives become more and more prominent. One of those narratives is about the importance of experience.

Experience, we are told over and over, is important because “guys who have done it before will play better when it matters.” Others make arguments based on the importance of continuity in a team. Some appeal to the obvious fact that players get better over the course of their careers.

I believe that unlike many other sports narratives, the experience argument has value. Like any good quantitative analyst, however, I thought we should test it.

Predicting Efficiency Using Returning Minutes

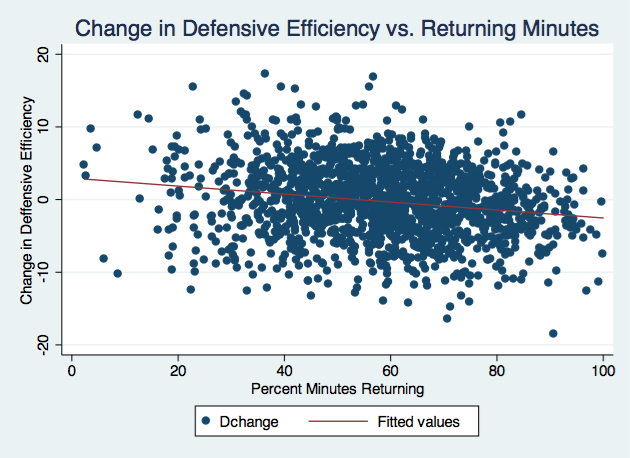

I decided to measure experience by using a team’s percent of minutes returning from the prior season. Using five years of data, I wanted to look at whether returning minutes predicted offensive and defensive efficiency. First, let’s look at the raw correlations:

Clearly, more returning minutes are associated with better play in the subsequent year. (The correlation between the change in Offensive Efficiency from Year 1 to Year 2 and the percent of returning minutes in Year 2 is .36. The corresponding correlation for change in Defensive Efficiency is weaker, around -.19; the sign is negative because a lower Defensive Efficiency means fewer points allowed.)

As you can see from the graphs (apologies on the number of data points), the variance of these outcomes appears to go down as returning minutes increase. This makes sense: teams with fewer returning minutes should have a wider range of outcomes relative to the previous year’s squad, since the two teams have much less in common.
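For readers who want to compute this kind of raw correlation themselves, here is a minimal sketch of a Pearson correlation between returning minutes and the year-over-year change in efficiency. The function name and the five sample teams are hypothetical placeholders, not the data behind the figures quoted above.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two equal-length sequences with at least 2 points")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Co-movement of the two series around their means.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


# Hypothetical illustration only: percent of minutes returning, and the
# change in offensive efficiency from Year 1 to Year 2, for five made-up teams.
returning_pct = [35.0, 50.0, 62.0, 78.0, 90.0]
off_eff_change = [-4.1, 1.5, -0.8, 3.2, 2.0]

r = pearson(returning_pct, off_eff_change)
print(f"correlation: {r:.2f}")
```

Running the same function on the change in defensive efficiency would give the second correlation; a negative value there points in the "better defense" direction.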

Adding Talent Baseline Into The Mix

This basic analysis is a good start, but we also need to consider the initial level of a team’s performance. A mediocre team that returns 90% of its minutes has a lot more room to improve than an elite team that also returns 90% of its minutes.

To look at the importance of returning minutes in this context, I attempted to predict a team’s Offensive Efficiency via regression.

[Regression analysis is a technique that allows us to understand what factors are useful in predicting some other value. The regression takes as inputs a team’s performance in the previous year and their returning minutes, with the output being offensive efficiency in the next year.]

Predicting Offensive Efficiency from Previous Season Stats

| Term | Coefficient | Standard Error | T Stat |
|---|---|---|---|
| Previous Def. Eff. | -0.223 | 0.023 | 9.74 |
| Previous Off. Eff. | 0.381 | 0.054 | 7.10 |
| Returning Minute % | -0.301 | 0.086 | 3.51 |
| Ret Min * Prev. Off. Eff. | 0.004 | 0.0008 | 4.85 |
| Constant | 78.9 | 6.54 | 12.06 |

R^2 for this regression was 0.675

As you can see, returning minutes is an important factor in predicting offensive efficiency. The T stats, reported here as absolute values, are all larger than 2, which indicates that every coefficient is statistically significant. The coefficients themselves tell us how strong the relationship is between each stat and the next year’s efficiency. For example, if Team A was 10 points better on offense than Team B in the previous year, we would expect them to be 3.8 points better in the subsequent year, all else being equal and before the interaction with returning minutes is counted.

The interaction term (Ret Min * Prev. Off. Eff.) shows that returning minutes, which is a positive factor in predicting efficiency, is less of a positive when the team was very efficient in the previous season.
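To make the table concrete, here is a small sketch that plugs the printed coefficients into the regression equation. It assumes returning minutes are on a 0 to 100 scale and that both efficiencies are points per 100 possessions; neither scale is stated outright, and the function names and example teams are hypothetical.

```python
# Coefficients copied from the regression table.
CONSTANT = 78.9
B_PREV_DEF = -0.223
B_PREV_OFF = 0.381
B_RET_MIN = -0.301
B_INTERACTION = 0.004  # Ret Min * Prev. Off. Eff.


def predict_off_eff(prev_off, prev_def, ret_min_pct):
    """Predicted next-season offensive efficiency.

    Assumption: ret_min_pct is on a 0-100 scale and the efficiencies are
    points per 100 possessions.
    """
    return (
        CONSTANT
        + B_PREV_DEF * prev_def
        + B_PREV_OFF * prev_off
        + B_RET_MIN * ret_min_pct
        + B_INTERACTION * ret_min_pct * prev_off
    )


def ret_min_slope(prev_off):
    """Change in the prediction per extra percentage point of returning minutes."""
    return B_RET_MIN + B_INTERACTION * prev_off


# Two hypothetical teams that differ only by 10 points of previous offense,
# compared at two different levels of returning minutes.
for ret in (0, 60):
    gap = predict_off_eff(110, 100, ret) - predict_off_eff(100, 100, ret)
    print(f"returning {ret}%: predicted gap = {gap:.2f}")
```

With no returning minutes, the gap is the 3.8 points from the example above; at 60 percent returning minutes the same arithmetic gives about 6.2, because the interaction term adds to the previous-offense coefficient. The helper `ret_min_slope` simply reads the slope off the printed coefficients, -0.301 + 0.004 × previous offensive efficiency. Since the interaction coefficient is rounded to a single significant digit, treat its output as rough arithmetic on the table rather than a reproduction of the fitted model.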

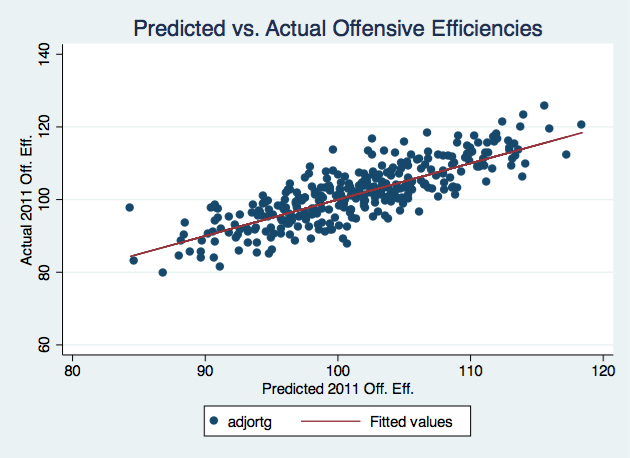

When I took the 2011 season out of the dataset, ran the regression again, and used it to predict 2011 offensive efficiencies, the correlation between the actual and predicted offensive efficiencies was 0.81. The results are shown in the chart below:

The red 45 degree line represents a perfect prediction. As you can see, the model does a fairly good job of predicting offensive efficiency.

In other words, knowing a team’s performance last year and how many minutes they are returning gets you much of the way towards predicting their next season’s offensive efficiency. (Add in recruiting info, and you’ve got most of the inputs to our preseason ratings projections.)
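The hold-out check described above (drop one season, refit, predict the dropped season, compare) can be sketched in a few lines. This is a simplified illustration with a single predictor, previous offensive efficiency, rather than the four-term model in the table, and every row of data below is made up.

```python
import math


def fit_line(xs, ys):
    """Ordinary least squares for y = intercept + slope * x."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope


def pearson(xs, ys):
    """Pearson correlation between two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


def holdout_correlation(rows, holdout_season):
    """Fit on every season except one, then score predictions on that season.

    rows: list of (season, prev_off_eff, off_eff) tuples.
    """
    train = [r for r in rows if r[0] != holdout_season]
    test = [r for r in rows if r[0] == holdout_season]
    intercept, slope = fit_line([r[1] for r in train], [r[2] for r in train])
    predicted = [intercept + slope * r[1] for r in test]
    actual = [r[2] for r in test]
    return pearson(predicted, actual)


# Made-up rows for illustration only: (season, previous OE, next-season OE).
rows = [
    (2009, 95.0, 98.0), (2009, 105.0, 103.0), (2009, 112.0, 108.5),
    (2010, 98.0, 100.5), (2010, 101.0, 99.0), (2010, 110.0, 107.0),
    (2011, 96.0, 97.5), (2011, 104.0, 104.0), (2011, 109.0, 105.5),
]
print(round(holdout_correlation(rows, 2011), 2))
```

The key design point is that the held-out season never touches the fit, so the correlation measures how well the model predicts teams it has not seen.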

As the above results show, returning minutes are a very important positive factor in predicting performance. This lends credence to the “experience” narrative: it does seem that, in general, experience is positively associated with future performance.

But what about March Madness specifically?

How Much Does Experience Matter In March?

To examine this question, I took the last six years of NCAA tournaments and our own Predictive Power Ratings from before each tournament, and used a regression technique called an ordered probit.

The ordered probit is fairly complex, but the basic idea is that it breaks the tournament into segments, in this case rounds, and allows us to see what factors predict advancing from round to round (i.e. winning).
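For the curious, the core of an ordered probit is short enough to sketch. A team gets a single latent score from its predictors, and a set of ascending cutpoints slices the normal distribution around that score into ordered outcomes. Here I assume the outcome is coded as number of tournament wins (0 through 6); the cutpoints and scores below are invented for illustration and are not the fitted values from my model.

```python
import math


def normal_cdf(z):
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def ordered_probit_probs(score, cutpoints):
    """Probability of each ordered outcome given a latent score.

    score: linear combination of a team's predictors.
    cutpoints: ascending thresholds; K cutpoints define K + 1 outcomes.
    """
    # P(outcome <= k) = Phi(cutpoint_k - score)
    cumulative = [normal_cdf(c - score) for c in cutpoints]
    bounds = [0.0] + cumulative + [1.0]
    # Each outcome's probability is the gap between adjacent cumulative values.
    return [upper - lower for lower, upper in zip(bounds, bounds[1:])]


# Hypothetical cutpoints separating 0, 1, 2, 3, 4, 5 and 6 wins.
cutpoints = [0.0, 0.8, 1.5, 2.1, 2.6, 3.0]
for score in (0.0, 1.5):
    probs = ordered_probit_probs(score, cutpoints)
    print(score, [round(p, 3) for p in probs])
```

A positive coefficient raises a team's latent score, which shifts probability away from early exits and towards deeper runs. That is why the direction of each coefficient is meaningful even when its size is hard to read.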

Here, the Experience term is the number of games a team played in the NCAA tournament in the previous year multiplied by the percent of minutes that team returns. In other words, I’m looking at the predictive power of NCAA tournament experience, not just game experience in general.
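As a quick illustration of that definition, here is the Experience term as a one-line calculation. The function name is hypothetical, and I am assuming returning minutes are expressed as a fraction between 0 and 1; a 0 to 100 scale would only rescale the term.

```python
def tournament_experience(prev_tourney_games, returning_minutes_share):
    """Games played in last year's NCAA tournament, weighted by roster continuity.

    Assumption: returning_minutes_share is a fraction in [0, 1].
    """
    if not 0.0 <= returning_minutes_share <= 1.0:
        raise ValueError("returning_minutes_share must be between 0 and 1")
    return prev_tourney_games * returning_minutes_share


# A team that played 4 tournament games and returns 75% of its minutes.
print(tournament_experience(4, 0.75))  # 3.0
# A team that missed last year's tournament scores 0, however much it returns.
print(tournament_experience(0, 0.95))  # 0.0
```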

| Term | Direction of Coefficient | Significance |
|---|---|---|
| Predictive Power Rating | Positive | 95 percent level |
| Strength of Schedule | Negative | 99 percent level |
| SOS * Predictive Rating | Positive | 99 percent level |
| Experience | Positive | 95 percent level |
| Consistency | Negative | 95 percent level |

I have not included the coefficients because ordered probit is a particularly difficult regression to interpret, but the direction of each coefficient is still informative.

There are a couple of interesting results here: we see diminishing returns to Strength of Schedule (the negative term in the interaction effect), and Consistency actually has a negative effect. More importantly for this discussion, we see that previous NCAA tournament experience is a statistically significant positive predictor of future NCAA tournament success.

An important takeaway, though, is that it is not experience alone that presages better performance. Returning everyone from a mediocre team does not transform it into a world beater.

So when you start hearing about the importance of experience in March, remember that experience is important, but talent is important, too.

Printed from TeamRankings.com - © 2005-2024 Team Rankings, LLC. All Rights Reserved.